Can we use crowd sourcing to improve AtD?

I’ve written about learning from AtD use in the past. The main ideas I had back then were to bring more data into AtD’s corpus and analyze ignored phrases to find gaps in AtD’s dictionary. I put some time into these ideas but the initial results didn’t look too promising, so I backed off.

Recently, the operator of Online Rechtschreibprüfung 24/7 contacted me. His website offers German spell and grammar checking services (with a beta version using AtD). Neat stuff. Being the nice guy that he is, he is also giving back. His users have the option of marking a spelling mistake as false. He has collected this data and made it available to improve the German After the Deadline dictionary.

This got me thinking. What could I do to PolishMyWriting.com to help you help me improve After the Deadline’s English checking?

Here are some ideas:

- Add a “Not Misspelled?” menu item for spelling errors. This could collect a list of words that are candidates to be added to AtD’s dictionary, similar to what Online Rechtschreibprüfung 24/7 does.

- Add a “Not an error?” menu item for grammar and style errors. This could collect the error and the context around it.

- Add a “Better suggestion?” menu item for grammar, style, and misused word errors. Here you could input a better suggestion for an error.

These three things are pretty trivial to do. I’d also like to find a way to let users highlight errors that aren’t caught. I don’t have any ideas for magically learning from these suggestions. Right now I’d have to analyze each of them and develop rules to catch these errors.

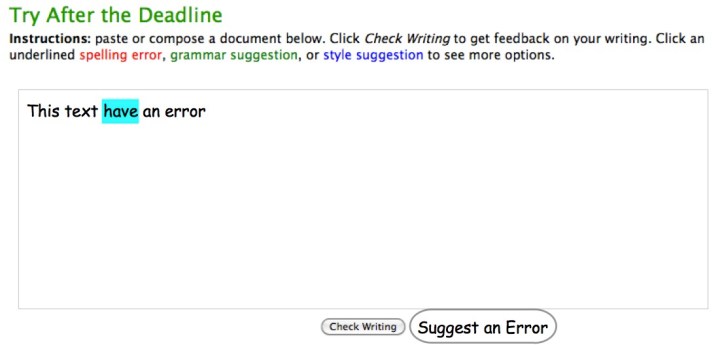

Maybe an option to highlight some text, click Suggest an Error, and complete a short survey about what type of error the text contains.

What are your thoughts?

WordCamp NYC Ignite: After the Deadline

I see that the After the Deadline demonstration for WordCamp NYC has been posted. This short five-minute demonstration covers the plugin and its features.

Before you watch this video, can you find the error in each of these text snippets?

There is a part of me that believes that if I think about these issues, if I put myself through the emotional ringer, I somehow develop an immunity for my own family. Does writing a book about bullying protect your children from being bullied? No. I realize that this kind of thinking is completely ridiculous.

[Op-Ed] … Roberts marshaled a crusader’s zeal in his efforts to role back the civil rights gains of the 1960s and ’70s — everything from voting rights to women’s rights.

The success of Hong Kong residents in halting the internal security legislation in 2004, however, had an indirect affect on allowing the vigil here to grow to the huge size it was this year.

These examples come from the After Deadline blog post When Spell-Check Can’t Help. You can watch the video to learn what the errors are and how After the Deadline can help. You can also try these out at http://www.polishmywriting.com.

You can also view the WCNYC session on how to embed After the Deadline into an application.

Making the spell checker… more awesome.

I don’t talk much about the After the Deadline spell checker. Many people believe spell checking is a solved problem and that all spell checkers must “work the same way”. AtD does more than spell checking, so I talk about these other things.

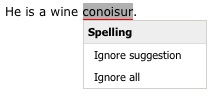

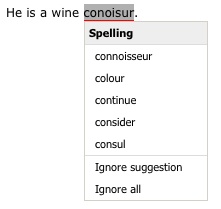

Despite the lack of talk, AtD’s spell checker is awesome and I’m constantly improving it. That’s what I’m going to write about today. This past weekend I was writing a post and I tried to spell “conoisur”. As you can tell, I don’t know how to spell it and my attempt isn’t even close. It’s bad enough that I make spell checkers and can’t spell; it’s terrible that AtD couldn’t give me any suggestions. I felt left out in the cold.

Most spelling errors are one or two changes away from the intended word. AtD takes advantage of this and limits its suggestion search to words within two changes. By limiting the search space for words, AtD has less to choose from. This means AtD gives you the right answer more often.

Peter Norvig conducted a quick experiment where he learned that 98.9% of the errors in the Birkbeck spelling error corpus were within two edits and 76% were one edit away. These numbers are close to what I found in my own experiments months ago. I’d present those numbers but I… lost them. *cough*

From these numbers, I felt it was safe to ignore words three or more edits away from the misspelling. This works well except when I try to spell words like connoisseur or bureaucracy. For my attempts, AtD generated no suggestions.

Then it hit me! If there are no suggestions within two edits… why don’t I try to find words within three edits? And if there are still no suggestions then, why don’t I try words within four edits? I could go on like this forever. Unfortunately nothing is free and doing this would kill AtD’s performance. So I decided to limit my experiment to finding words within three edits when no words are within two edits.
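Here is a minimal sketch of that fallback in Python. It is not AtD’s actual code: the names `edit_distance` and `suggest` and the toy dictionary are made up for illustration, and scanning every dictionary word is a simplification that would be far too slow for a real wordlist.

```python
def edit_distance(a, b):
    """Edit distance counting inserts, deletes, substitutions and
    swaps of two adjacent letters (optimal string alignment)."""
    prev2, prev = None, list(range(len(b) + 1))
    for i in range(1, len(a) + 1):
        cur = [i] + [0] * len(b)
        for j in range(1, len(b) + 1):
            cost = 0 if a[i - 1] == b[j - 1] else 1
            cur[j] = min(prev[j] + 1,         # delete from a
                         cur[j - 1] + 1,      # insert into a
                         prev[j - 1] + cost)  # substitute (or match)
            if i > 1 and j > 1 and a[i - 1] == b[j - 2] and a[i - 2] == b[j - 1]:
                cur[j] = min(cur[j], prev2[j - 2] + 1)  # adjacent swap
        prev2, prev = prev, cur
    return prev[len(b)]


def suggest(word, dictionary, max_edits=2, fallback_edits=3):
    """Return words within max_edits; only if there are none,
    widen the search once to fallback_edits."""
    distances = {w: edit_distance(word, w) for w in dictionary}
    for limit in (max_edits, fallback_edits):
        pool = sorted(w for w, d in distances.items() if d <= limit)
        if pool:
            return pool
    return []


# Hypothetical three-word dictionary, just to exercise the fallback.
toy_dictionary = {"connoisseur", "bureaucracy", "spelling"}
print(suggest("speling", toy_dictionary))   # found within two edits
print(suggest("conoisur", toy_dictionary))  # needs the three-edit fallback
```

The wider search only runs when the normal one comes back empty, so the common case pays nothing extra.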

AtD’s spellchecker uses a neural network to score suggestions and compare them to each other. During training the neural network converges on an optimal weighting for each feature I give it. One of the features I give to the neural network is the edit distance normalized between 0.0 and 1.0. Edit distance is weighted highly as a value close to 1.0 is correct 76% of the time. I feared that introducing high edit distances that are almost always correct would create something that looks like a valley to the neural network. Meaning a high value is usually correct, a low value is usually correct, and the stuff in the middle is probably not so correct. Neural networks will make good guesses but they’re dependent on being told the story in the right way. This valley thing wasn’t going to work. So I modified the features so that an edit distance of 1 is a weight of 1.0 and everything else is 0.0.
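To make the feature change concrete, here is a small Python sketch. The binary feature follows the description above. The “normalized” version is only a guess at what a 0.0 to 1.0 scaling might look like (I’m assuming `1.0 / distance`), included for contrast. Both function names are hypothetical.

```python
def normalized_edit_feature(distance):
    """ASSUMED normalization, for illustration only: 1 edit -> 1.0,
    2 edits -> 0.5, 3 edits -> about 0.33."""
    return 1.0 / distance


def binary_edit_feature(distance):
    """The modified feature: 1.0 for a single edit, 0.0 for anything else."""
    return 1.0 if distance == 1 else 0.0


for d in (1, 2, 3):
    print(d, round(normalized_edit_feature(d), 2), binary_edit_feature(d))
```

With the binary version, a three-edit suggestion looks the same to the network as a two-edit one, so widening the search does not create a valley between the high and low values.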

Here are the numbers on AtD’s spell checker before I made these changes:

Word Pool Accuracy

| Data Set | Accuracy |
|---|---|
| sp_test_wpcm_nocontext.txt | 97.80% |
| sp_test_aspell_nocontext.txt | 79.06% |

Spelling Corrector Accuracy

| Data Set | Neural Network | Freq/Edit | Random |
|---|---|---|---|
| sp_test_gutenberg_context1.txt | 93.97% | 83.16% | 29.71% |
| sp_test_gutenberg_context2.txt | 77.14% | 59.68% | 19.68% |

The word pool accuracy is a measure of how often the pool of suggested words AtD generates has the intended word for a misspelled one in the test data. If the correct word is not here, AtD won’t be able to suggest it. The corrector accuracy is a measurement of how accurate AtD is at sorting the suggestion pool and putting the intended word on the top. The neural network score is what AtD uses in production and includes context. The joint frequency/edit distance score is similar to the statistical corrector in How to write a spelling corrector. Without context I don’t expect most spell checkers will do much better than that.
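A rough Python sketch of how these two measurements could be computed. This is not AtD’s test harness: `evaluate`, `pool_for`, `score`, and the toy data are all made up, and I’m assuming corrector accuracy is counted only over cases where the intended word made it into the pool.

```python
def evaluate(cases, pool_for, score):
    """cases: list of (misspelling, intended) pairs.
    pool_for(misspelling) -> list of candidate words.
    score(misspelling, candidate) -> number, higher is better."""
    in_pool = 0
    ranked_first = 0
    for misspelling, intended in cases:
        pool = pool_for(misspelling)
        if intended not in pool:
            continue  # the corrector never gets a chance on this case
        in_pool += 1
        best = max(pool, key=lambda w: score(misspelling, w))
        if best == intended:
            ranked_first += 1
    pool_accuracy = in_pool / len(cases)
    corrector_accuracy = ranked_first / in_pool if in_pool else 0.0
    return pool_accuracy, corrector_accuracy


# Toy data: hand-written pools and a frequency-only score.
toy_pools = {
    "teh": ["the", "ten"],
    "recieve": ["receive", "relieve"],
    "wierd": ["wired", "weird"],
    "conoisur": [],
}
toy_freq = {"the": 100, "ten": 10, "receive": 5, "relieve": 4, "wired": 8, "weird": 7}
toy_cases = [("teh", "the"), ("recieve", "receive"),
             ("wierd", "weird"), ("conoisur", "connoisseur")]

pool_acc, corr_acc = evaluate(
    toy_cases,
    pool_for=lambda m: toy_pools[m],
    score=lambda m, w: toy_freq[w],
)
print(pool_acc, corr_acc)
```

In the toy data, “conoisur” hurts the pool accuracy (empty pool) while “wierd” hurts the corrector accuracy (right word in the pool, wrong word ranked first).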

Here are the numbers with the changes:

Word Pool Accuracy

| Data Set | Accuracy |
|---|---|
| sp_test_wpcm_nocontext.txt | 98.19% (+0.39%) |
| sp_test_aspell_nocontext.txt | 82.58% (+3.52%) |

Spelling Corrector Accuracy

| Data Set | Neural Network | Freq/Edit | Random |
|---|---|---|---|
| sp_test_gutenberg_context1.txt | 94.51% (+0.54%) | 83.08% | 30.18% |
| sp_test_gutenberg_context2.txt | 79.38% (+2.24%) | 60.31% | 23.69% |

What this means for you

These little changes let AtD expand its search for suggestions when none are found. This means better recommendations for hard-to-spell words without impacting AtD’s stellar correction accuracy. I hope you enjoy it.

How does this compare to MS Word, ASpell, and others?

If you want to compare these numbers with other systems, I presented numbers from similar data in another blog post. Be sure to multiply the spelling corrector accuracy by the word pool accuracy when comparing these numbers to the ones in the other post. For example: 0.9451 * 0.9819 = 0.9280 = 92.80%

If you’d like to play around with spelling correction and neural networks, consider downloading the After the Deadline source code. Everything you need to conduct your own experiments is included and it’s open source. The data sets used here are available in the bootstrap data distribution.


Where I’d like to see AtD go…

I often get emails from folks asking for support for different platforms. I love to help folks and I’m very interested in solving a problem. I don’t have the expertise in all the platforms folks want AtD to support. Since it’s my occupation, I plan to keep improving AtD as a service, but here is my wish list of places where I’d like to see AtD wind up:

Wikipedia

I’d like to meet Jimmy Wales, one of the founders of Wikipedia. First, because I love Wikipedia and it tickles me pink that so much knowledge is available at my fingertips. I’m from the last generation to grow up with hardbound encyclopedias in my home.

Second, because I’d love to explore how After the Deadline could help Wikipedia. AtD could help raise the quality of writing there.

Since the service will be open source there won’t be an IP cost necessarily. The only barriers are AtD support in the MediaWiki software and server side costs. Fortunately I’ve learned a lot about scaling AtD from working with WordPress.com and given a number of edits/hour and server specs, I could come up with a good guess about how much horsepower is really needed.

I’d love to write the MediaWiki plugin myself but unfortunately I’m so caught up trying to improve the core AtD product that this is beyond my own scope. If anyone chooses to pick this project up, let me know, I’ll help in any way I can.

Online Office Suites and Content Management Systems

There are a lot of people cutting and pasting from Word to their content management systems. Many web applications are taking over from the word processor, either completely or for niche tasks. For this shift to really happen these providers need to offer proofreading tools that match what the user would get in their word processor. None of us are supposed to depend on automated tools but a lot of us do.

Abiword, KWord, OpenOffice, and Scribus

It’s a tough sell to say a technology like AtD belongs in a desktop word processor. I say this because AtD consumes boatloads of memory. I could adapt it to keep limited amounts of data in memory and swap necessary stuff from the disk. If there isn’t a form of AtD suitable for plugging into these applications, I hope someone clones the project and adapts it to these projects. If someone chooses to port AtD to C, let me know. I’ll probably give a little of my own time and will gladly answer questions.

After the Deadline: Acquired

Today I have big news to announce for After the Deadline. But first, I have to tell you a story.

I left the Air Force in March 2008 to pursue my dream of launching a startup and to finish graduate school.

Coming from the US Air Force Research Lab, I wanted to solve a problem and invent something cool. The most recent problem I had when leaving the Air Force was writing technical reports. I knew what I wanted to say but always had doubts about my style. So I decided to hunker down and write a style checker. I launched this tool as PolishMyWriting.com in July 08 and it went…. nowhere.

Later I wrote to a friend of mine in NYC who showed PolishMyWriting.com to his boss at TheLadders.com. His boss wrote something about it in their customer newsletter and thousands of people came to my site. They processed many documents and wrote to tell me how much this style checking tool helped them. This inspired me. I asked myself “if I’m selling umbrellas, where is it raining?” and I saw an opportunity in the web application space. My goal–bring word processor quality proofreading tools to the web. It was at this moment After the Deadline was born.

I submitted the style checker embedded into TinyMCE as part of an application to Y-Combinator, Spring 09. Later, I was greeted with a rejection letter. But that was ok! I knew I didn’t need permission to start a business. So on I went. I adhered to the proposed schedule and milestones.

By Mar 09, I had a pretty kick ass system going. The spellchecker accuracy was showing potential to rival even MS Word (context makes a big difference) and I knew the style checker was comparable to similar commercial software. My favorite feature though was the misused word detection. Outside of the latest MS Word–no one else really had this.

I applied to several other seed funds and after an encouraging meeting with a seed program in Boston, I found a trusted and qualified friend to handle business development if we were funded. At this time I took some cost cutting measures (*cough*sold everything, moved in with my sister*cough*) to keep going. My partner and I landed an interview and prepped like crazy for it. We weren’t funded and I received feedback that the idea was good but the problem was too hard. I’m thankful we had that interview though and was encouraged to make the first cut.

My short-lived partner went to a real job and I devoted another month to coding and launched in June 09. As part of the promotion process, I posted about AtD to Hacker News. I also left this comment, hoping to impress someone:

This paragraph is from a NY Times article. Can you find the error in it?

Still Mr. Franken said the whole experience had been disconcerting. “It’s a weird thing: people are always asking me and Franni, ‘Are you okay?’ ” he said, referring to his wife. “As sort of life crises go, this is low on the totem poll. But it is weird, it’s a strange thing.”

Neither can the spell checker in your browser. Why? Because most spell checkers do not look at context. After the Deadline does.

Besides misused word detection (and contextual spell checking), After the Deadline checks grammar and style as well.

Visit http://www.polishmywriting.com/nyt.html to see the answer.

That must have worked, because later, I received an email from Matt Mullenweg asking me about bringing After the Deadline to Automattic. Matt and I are both big believers in open source. We like to eat but at the same time see a bigger picture where impact matters. He is also an incredibly smooth chatter and anti-aliasing does wonders for his online presence. I knew this was an opportunity I couldn’t say no to.

And so here I am. We did the deal in July 09 and since then I’ve moved After the Deadline to Automattic’s infrastructure, rewritten the plugin, and improved the algorithms. Today it started checking the spelling, style, and grammar for millions of bloggers.

So what’s next?

I’m continuing this natural language processing research under the Automattic banner. We’re planning to expand AtD to support other languages.

After the Deadline will stay free for non-commercial use and we hope to see others build on the service.

And finally, our goal is to raise the quality of writing on the internet and give folks confidence in their voice. We’re planning to open source the After the Deadline engine and the rule-sets that go with it. This will be the most comprehensive proofreading suite available under an open source license. I’m excited about the opportunity to be a part of this contribution.

- WordPress.com Announcement for After the Deadline

- Matt Mullenweg’s Announcement about Automattic Acquiring After the Deadline

And some thanks…

The hardest part of waiting to make this announcement is I haven’t yet had a chance to publicly thank those who believed in this project from the beginning. I’d like to thank Mr. Elmer White for his legal counsel and support. Congratulations on your second grandson. Ms. Hye Yon Yi for her support and putting up with me when I was completely unavailable. Mr. Dug Song, Patron Saint of MI Hackers, for advising me on the business side. Ms. Katrina Campau for coaching me on the investor interview. The A2 New Tech (Ann Arbor, MI) for letting me present and being encouraging. Mr. David Groesbeck and Ms. Michelle Evanson at Why International for being my earliest business cheerleaders. Mr. Brandon Mumby for providing hosting, even after AtD caused a hardware failure. My colleagues, who serve at the Air Force Research Lab, for inspiring me to stay curious and keeping me in the community. The crew in #startups on FreeNode and the Hacker News community–thanks for showing it can be done. Of course my family (esp. my sister who let me turn the basement into a “command center”) and the makers of Mint Chocolate Chip ice-cream.

Tweaking the AtD Spellchecker

Conventional wisdom says a spellchecker dictionary should have around 90,000 words. Too few words and the spellchecker will mark many correct things as wrong. Too many words and it’s more likely a typo could result in a rarely used word going unnoticed by most spellcheckers.

Assembling a good dictionary is a challenge. Many wordlists are available online but oftentimes these are either not comprehensive or they’re too comprehensive and contain many misspellings.

AtD tries to get around this problem by intersecting a collection of wordlists with words it sees used in a corpus (a corpus is a directory full of books, Wikipedia articles, and blog posts I “borrowed” from you). Currently AtD accepts any word seen once, leading to a dictionary of 161,879 words. Too many.

Today I decided to experiment with different thresholds for how many times a word needs to be seen before it’s allowed entrance into the coveted spellchecker wordlist. My goal was to increase the accuracy of the AtD spellchecker and drop the number of misspelled words in the dictionary.
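The idea can be sketched in a few lines of Python. This is an illustration under my own assumptions, not AtD’s build script: `build_dictionary`, `min_count`, the crude tokenizer, and the toy wordlists and corpus are all hypothetical.

```python
import re
from collections import Counter


def build_dictionary(wordlists, corpus_texts, min_count=2):
    """Keep a word only if some wordlist contains it AND the corpus
    uses it at least min_count times."""
    candidates = set().union(*wordlists)
    counts = Counter(
        token
        for text in corpus_texts
        for token in re.findall(r"[a-z']+", text.lower())  # crude tokenizer
    )
    return {word for word in candidates if counts[word] >= min_count}


# Toy inputs: "teh" is a misspelling that slipped into a wordlist.
toy_wordlists = [{"the", "cat", "teh"}, {"sat", "zyzzyva"}]
toy_corpus = ["The cat sat.", "The cat ran. teh"]

print(sorted(build_dictionary(toy_wordlists, toy_corpus, min_count=1)))
print(sorted(build_dictionary(toy_wordlists, toy_corpus, min_count=2)))
```

Raising the threshold drops the misspelling, but it also drops legitimate words the corpus uses only once, which is why the threshold has to grow with the amount of data.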

Here are the results. AtD:n means AtD requires a word to be seen n times before AtD includes it in the dictionary.

ASpell Dataset (Hard to correct errors)

| Engine | Words | Accuracy * | Present Words |
|---|---|---|---|
| AtD:1 | 161,879 | 55.0% | 73 |
| AtD:2 | 116,876 | 55.8% | 57 |
| AtD:3 | 95,910 | 57.3% | 38 |
| AtD:4 | 82,782 | 58.0% | 30 |
| AtD:5 | 73,628 | 58.5% | 27 |
| AtD:6 | 66,666 | 59.1% | 23 |
| ASpell (normal) | n/a | 56.9% | 14 |
| Word 97 | n/a | 59.0% | 18 |
| Word 2000 | n/a | 62.6% | 20 |
| Word 2003 | n/a | 62.8% | 20 |

Wikipedia Dataset (Easy to correct errors)

| Engine | Words | Accuracy * | Present Words |
|---|---|---|---|
| AtD:1 | 161,879 | 87.9% | 233 |
| AtD:2 | 116,876 | 87.8% | 149 |
| AtD:3 | 95,910 | 88.0% | 104 |
| AtD:4 | 82,782 | 88.3% | 72 |
| AtD:5 | 73,628 | 88.3% | 59 |
| AtD:6 | 66,666 | 88.62% | 48 |
| ASpell (normal) | n/a | 84.7% | 44 |
| Word 97 | n/a | 89.0% | 31 |
| Word 2000 | n/a | 92.5% | 42 |
| Word 2003 | n/a | 92.6% | 41 |

Experiment data and comparison numbers from: Deorowicz, S., Ciura M. G., Correcting spelling errors by modelling their causes, International Journal of Applied Mathematics and Computer Science, 2005; 15(2):275–285.

* Accuracy numbers show spell checking without context as the Word and ASpell checkers are not contextual (and therefore the data isn’t either).

After seeing these results, I’ve decided to settle on a threshold of 2 to start and I’ll move to 3 after no one complains about 2.

I’m not too happy that the present word count is so sky high but as I add more data to AtD and up the minimum word threshold this problem should go away. This is progress though. Six months ago I had so little data I wouldn’t have been able to use a threshold of 2, even if I wanted to.
