Making the spell checker… more awesome.

I don’t talk much about the After the Deadline spell checker. Many people believe spell checking is a solved problem and they must “work the same way”. AtD does more than spell checking, so I talk about these other things.

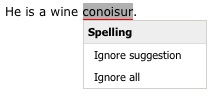

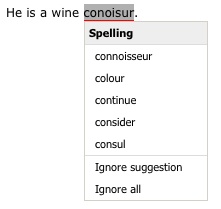

Despite the lack of talk, AtD’s spell checker is awesome and I’m constantly improving it. That’s what I’m going to write about today. This past weekend I was writing a post and I tried to spell “conoisur”. As you call tell, I don’t know how to spell it and my attempt isn’t even close. It’s bad enough that I make spell checkers and can’t spell, it’s terrible that AtD couldn’t give me any suggestions. I felt left out in the cold.

Most spelling errors are one or two changes away from the intended word. AtD takes advantage of this and limits its suggestion search to words within two changes. By limiting the search space for words, AtD has less to choose from. This means AtD give you the right answer more often.

Peter Norvig conducted a quick experiment where he learned that 98.9% of the errors in the Birkbeck spelling error corpus were within two edits and 76% were one edit away. These numbers are close to what I found in my own experiments months ago. I’d present those numbers but I… lost them. *cough*

From these numbers, I felt it was safe to ignore words three or more edits away from the misspelling. This works well except when I try to spell words like connoisseur or bureaucracy. For my attempts, AtD generated no suggestions.

Then it hit me! If there are no suggestions within two edits… why don’t I try to find words within three edits. And if there are still no suggestions then, why I don’t I try words within four edits. I could go on like this forever. Unfortunately nothing is free and doing this would kill AtD’s performance. So I decided to limit my experiment to find words within three edits when no words are within two edits.

AtD’s spellchecker uses a neural network to score suggestions and compare them to each other. During training the neural network converges on an optimal weighting for each feature I give it. One of the features I give to the neural network is the edit distance normalized between 0.0 and 1.0. Edit distance is weighted highly as a value close to 1.0 is correct 76% of the time. I feared that introducing high edit distances that are almost always correct would create something that looks like a valley to the neural network. Meaning a high value is usually correct, a low value is usually correct, and the stuff in the middle is probably not so correct. Neural networks will make good guesses but they’re dependent on being told the story in the right way. This valley thing wasn’t going to work. So I modified the features so that an edit distance of 1 is a weight of 1.0 and everything else is 0.0.

Here are the numbers on AtD’s spell checker before I made these changes:

Word Pool Accuracy

| Data Set | Accuracy |

|---|---|

| sp_test_wpcm_nocontext.txt | 97.80% |

| sp_test_aspell_nocontext.txt | 79.06% |

Spelling Corrector Accuracy

| Data Set | Neural Network | Freq/Edit | Random |

|---|---|---|---|

| sp_test_gutenberg_context1.txt | 93.97% | 83.16% | 29.71% |

| sp_test_gutenberg_context2.txt | 77.14% | 59.68% | 19.68% |

The word pool accuracy is a measure of how often the pool of suggested words AtD generates has the intended word for a misspelled one in the test data. If the correct word is not here, AtD won’t be able to suggest it. The corrector accuracy is a measurement of how accurate AtD is at sorting the suggestion pool and putting the intended word on the top. The neural network score is what AtD uses in production and includes context. The joint frequency/edit distance score is similar to the statistical corrector in How to write a spelling corrector. Without context I don’t expect most spell checkers will do much better than that.

Here are the numbers with the changes:

Word Pool Accuracy

| Data Set | Accuracy |

|---|---|

| sp_test_wpcm_nocontext.txt | 98.19% +0.39% |

| sp_test_aspell_nocontext.txt | 82.58% +3.52% |

Spelling Corrector Accuracy

| Data Set | Neural Network | Freq/Edit | Random |

|---|---|---|---|

| sp_test_gutenberg_context1.txt | 94.51% +0.54% | 83.08% | 30.18% |

| sp_test_gutenberg_context2.txt | 79.38% +2.24% | 60.31% | 23.69% |

What this means for you

These little changes let AtD expand its search for suggestions when none were found. This means better recommendations for hard to spell words without impacting AtD’s stellar correction accuracy. I hope you enjoy it.

How does this compare to MS Word, ASpell, and others?

If you want to compare these numbers with other systems, I presented numbers from similar data in another blog post. Be sure to multiply the spelling corrector accuracy with the word pool accuracy when comparing these numbers in the ones in the other post. For example: 0.9451 * 0.9891 = 0.9348 = 93.48%

If you’d like to play around with spelling correction and neural networks, consider downloading the After the Deadline source code. Everything you need to conduct your own experiments is included and it’s open source. The data sets used here are available in the bootstrap data distribution.

Statistical Grammar Correction or Not…

One thing that makes AtD so powerful is using the right tool for the right job. AtD uses a rule-based approach to find some errors and a statistical approach to find others. The misused word detection feature is the heaviest user of the statistical approach.

Last week I made great progress on the misused word detection making it look at the two words (trigrams) before the phrase in question instead of just one (bigrams). There were several memory challenges to get past to make this work in production, but it happened and I was happy with the results.

Before this, I just looked at the one word before and after the current word. All well and good but for certain kinds of words (too/to, their/there, your/you’re) this proved useless as it almost always guessed wrong. For these words I moved to a rule-based approach. Rules are great because they work and they’re easy to make. The disadvantage of rules is a lot of them are required to get broad coverage of the errors people really make. The statistical approach is great because it can pick up more cases.

The Experiment

So with the advent of the trigrams I decided to revisit the statistical technology for detecting errors I use rules for. I focused on three types of errors just to see what kind of difference there was. These types were:

- Wrong verb tense (irregular verbs only) – Writers tend to have trouble with irregular verbs as each case has to be memorized. Native English speakers aren’t too bad but those just learning have a lot of trouble with these. An example set would be throw vs. threw vs. thrown vs. throws vs. throwing.

- Agreement errors (irregular nouns only) – Irregular nouns are nouns that have plural and singular cases one has to memorize. You can’t just add an s to convert them. An example is die vs. dice.

- Confused words – And finally there are several confused words that the statistical approach just didn’t do much good for. These include it’s/its, their/there, and where/were.

You’ll notice that each of these types of errors relies on fixed words with a fixed set of alternatives. Perfect for use with the misused word detection technology.

My next step was to generate datasets for training and evaluating a neural network for each of these errors. With this data I then trained the neural network and compared the test results of this neural network (Problem AI) to how the misused word detector (Misused AI) did as-is against these types of errors. The results surprised me.

The Results

| Problem AI | Misused AI | |||

|---|---|---|---|---|

| Precision | Recall | Precision | Recall | |

| Irregular Verbs | 86.28% | 86.43% | 84.74% | 84.68% |

| Irregular Nouns | 93.91% | 94.36% | 93.77% | 94.65% |

| Confused Words | 85.38% | 83.64% | 83.85% | 81.90% |

Table 1. Statistical Grammar Checking with Trigrams

First, you’ll notice the results aren’t that great. Imagine what they were like before I had trigrams. 🙂 Actually, I ran those numbers too. Here are the results:

| Problem AI | Misused AI | |||

|---|---|---|---|---|

| Precision | Recall | Precision | Recall | |

| Irregular Verbs | 69.02% | 68.76% | 64.84% | 64.43% |

| Irregular Nouns | 83.66% | 83.66% | 82.93% | 82.93% |

| Confused Words | 80.15% | 77.82% | 76.15% | 73.27% |

Table 2. Statistical Grammar Checking with Bigrams

So, my instinct was at least validated. There was a big improvement going from bigrams to trigrams… just not big enough. What surprised me from these results is how close the AI trained for detecting the misused words performed to the AI trained for the problem at hand.

So one this experiment showed there is no need to train a separate model for dealing with these other classes of errors. There is a slight accuracy difference but it’s not significant enough to justify a separate model.

Real World Results

The experiment shows that all I need (in theory) is a higher bias to protect against false positives. With higher biasing in place (my target is always <0.05% false positives), I decided to run this feature against real writing to see what kind of errors it finds. This is an important step because the tests come from fabricated data. If the mechanism fails to find meaningful errors in a real corpus of writing then it’s not worth adding to AtD.

The irregular verb rules found 500 “errors” in my writing corpus. Some of the finds were good and wouldn’t be picked up by rules, for example:

Romanian justice had no saying whatsoever

[ACCEPT] no, saying -> @('say')

However it will mean that once 6th May 2010 comes around the thumping that Labour get will be historic

[ACCEPT] Labour, get -> @('getting', 'gets', 'got', 'gotten')

Unfortunately, most of the errors are noise. The confused words and nouns have similar stories.

Conclusions

The misused word detection is my favorite feature in AtD. I like it because it catches a lot of errors with little effort on my part. I was hoping to get the same win catching verb tenses and other errors. This experiment showed misused word detection is fine for picking a better fit from a set and I don’t need to train separate models for different types of errors. This gives me another tool to use when creating rules and also gives me a flexible tool to use when trying to mine errors from raw text.

Attempting to Detect and Correct Out of Place Plurals and Singulars

Welcome to After the Deadline Sing-A-Long. I’m constantly trying different experiments to catch my (and occasionally your) writing errors. When AtD is open sourced, you can play along at home. Read on to learn more.

Today, I’m starting a new series on this blog where I will show off experiments I’m conducting with AtD and sharing the code and ideas I use to make them happen. I call this After the Deadline Sing-A-Long. My hope is when I finally tar up the sourcecode and make AtD available, you can replicate some of these experiments, try your own ideas, have a discovery, and send them to me.

Detecting and Correcting Plural or Singular Words

One of the mistakes I make most is to accidentally add an ‘s’ to a word making it plural when I really wanted it to be singular. My idea? Why not take every plural verb and noun and convert them to their singular form and see if the singular form is a statistically better fit.

AtD can do this. The grammar and style checker are rule-based but they also use the statistical language model to filter out false positives. To set up this experiment, I created a rule file with:

.*/NNS::word=:singular::pivots=,:singular .*/VBZ::word=:singular::pivots=,:singular

AtD rules each exist on their own line and consist of declarations separated by two colons. The first declaration is a pattern that represents the phrase this rule should match. It can consist of one or more [word pattern]/[tag pattern] sequences. The tag pattern is the part-of-speech (think noun, verb, etc.). After the pattern comes the named declarations. The word= declaration is what I’m suggesting in place of the matched phrase. Here I’m converting any plural noun or verb to a singular form. The pivots specify the parts of the phrase that have changed to inform that statistical filtering I mentioned earlier.

The next step is to create a file with some examples to test with. I generally do this to see if the rule does what I want it to do. Here are two of the sentences I tried:

There are several people I never had a chance to thanks publicly. After the Deadline is a tool to finds errors and correct them.

So, with these rules in place, here is what happened when I tested them:

atd@build:~/atd$ ./bin/testr.sh plural_to_singular.rules examples.txt

Warning: Dictionary loaded: 124264 words at dictionary.sl:50

Warning: Looking at: several|people|I = 0.003616585140061973 at testr.sl:24

Warning: Looking at: several|person|I = 1.1955063236931511E-4 at testr.sl:24

Warning: Looking at: to|thanks|publicly = 1.25339251574261E-6 at testr.sl:24

Warning: Looking at: to|thank|publicly = 1.7004358463574743E-4 at testr.sl:24

There are several people I never had a chance to thanks publicly.

There/EX are/VBP several/JJ people/NNS I/PRP never/RB had/VBD a/DT chance/NN to/TO thanks/NNS publicly/RB

0) [REJECT] several, people -> I

id => 3095c361e8beeb60abebed29fe5657be

pivots => ,:singular

path => @('.*', 'NNS')

word => :singular

1) [ACCEPT] to, thanks -> @('thank')

id => 3095c361e8beeb60abebed29fe5657be

pivots => ,:singular

path => @('.*', 'NNS')

word => :singular

Warning: Looking at: Deadline|is|a = 0.05030783620533642 at testr.sl:24

Warning: Looking at: Deadline|be|a = 0.00804979134152438 at testr.sl:24

Warning: Looking at: to|finds|errors = 0.0 at testr.sl:24

Warning: Looking at: to|find|errors = 0.0024240611254462076 at testr.sl:24

Warning: Looking at: finds|errors|and = 2.5553084536790455E-5 at testr.sl:24

Warning: Looking at: finds|error|and = 3.14416280096839E-4 at testr.sl:24

After the Deadline is a tool to finds errors and correct them.

After/IN the/DT Deadline/NN is/VBZ a/DT tool/NN to/TO finds/NNS errors/NNS and/CC correct/NN them/PRP

0) [REJECT] Deadline, is -> a

id => 1ab5cd35b6146cbecbc31c8b2a6d8e96

pivots => ,:singular

path => @('.*', 'VBZ')

word => :singular

1) [ACCEPT] to, finds -> @('find')

id => 3095c361e8beeb60abebed29fe5657be

pivots => ,:singular

path => @('.*', 'NNS')

word => :singular

2) [ACCEPT] finds, errors -> @('error')

id => 3095c361e8beeb60abebed29fe5657be

pivots => ,:singular

path => @('.*', 'NNS')

word => :singular

And that was that. After I ran the experiment against a more substantial amount of text, I found too many phrases were flagged incorrectly and I didn’t find many legitimate errors of this type. If I don’t see many obvious mistakes when applying a rule against several books, blogs, and online discussions–I ignore the rule.

In this case, the experiment showed this type of rule fails. There are options to make it better:

- Set a directive to raise the statistical threshold

- Try looking at more context (two words out, instead of one)

- Look at the output and try to create rules that look for more specific situations where this error occurs

Tweaking the Misused Word Detector

I’ve been hard at work improving the statistical misused word detection in After the Deadline. Nothing in the world of NLP is ever perfect. If computers understood English we’d have bigger problems like rapidly evolving robots that try to enslave us. I’m no longer interested in making robots to enslave people, that’s why I left my old job.

AtD’s statistical misused word detection relies on a database of confusion sets. A confusion set is a group of words that are likely to be mistaken for each other. An example is cite, sight, and site. I tend to type right when I mean write, but only when I’m really tired.

AtD keeps track of about 1,500 of these likely to be confused words. When it encounters one in your writing, it checks if any of the other words from the confusion set are a statistically better fit. If one of the words is a better fit then your old word is marked as an error and you’re presented with the options.

AtD’s misused word detection uses the immediate left and right context of the word to decide which word is the better fit. I have access to this information using the bigram language model I assembled from public domain books, Wikipedia, and many of your blog entries. Here is how well the statistical misused word detection fares with this information:

| Precision | Recall | |

|---|---|---|

| Gutenberg | 93.11% | 92.37% |

| Wikipedia | 93.64% | 93.04% |

Table 1. AtD Misused Word Detection Using Bigrams

Of course, I’ve been working to improve this. Today I got my memory usage under control and I can now afford to use trigrams or sequences of three words. With a trigram I can use the previous two words to try and predict the third word. Here is how the statistical misused word detection fares with this extra information:

| Precision | Recall | |

|---|---|---|

| Gutenberg | 97.30% | 96.75% |

| Wikipedia | 97.12% | 96.56% |

Table 2. AtD Misused Word Detection Using Bigrams and Trigrams

AtD uses neural networks to decide how to weight all the information fed to it. These tables represent the before and after of introducing trigrams. As you can see the use of trigrams significantly boosts precision (how often AtD is right when it marks a word as wrong) and recall (how often AtD marks a word as wrong when it is wrong).

Lies…

If you think these numbers are impressive (and they’re pretty good), you should know a few things. The separation between the training data and testing data isn’t very good. I generated datasets for training and testing, but both datasets were drawn from text used in the AtD corpus. More clearly put–these numbers assume 100% coverage of all use cases in the English language because all the training and test cases have trigrams and bigrams associated with them. If I were writing an academic paper, I’d take care to make this separation better. Since I’m benchmarking a feature and how new information affects it, I’m sticking with what I’ve got.

This feature is pretty good, and I’m gonna let you see it, BUT…

I have some work to do yet. In production I bias against false positives which hurts the recall of the system. I do this because you’re more likely to use the correct word and I don’t want AtD flagging your words unless it’s sure the word really is wrong.

The biasing of the bigram model put the statistical misused word detection at a recall of 65-70%. I expect to have 85-90% when I’m done experimenting with the trigrams. My target false positive rate is under 0.5% or 1:200 correctly used words identified as wrong.

With any luck this updated feature will be pushed into production tomorrow.

Tweaking the AtD Spellchecker

Conventional wisdom says a spellchecker dictionary should have around 90,000 words. Too few words and the spellchecker will mark many correct things as wrong. Too many words and it’s more likely a typo could result in a rarely used word going unnoticed by most spellcheckers.

Assembling a good dictionary is a challenge. Many wordlists are available online but often times these are either not comprehensive or they’re too comprehensive and contain many misspellings.

AtD tries to get around this problem by intersecting a collection of wordlists with words it sees used in a corpus ( a corpus is a directory full of books, Wikipedia articles, and blog posts I “borrowed” from you). Currently AtD accepts any word seen once leading to a dictionary of 161,879 words. Too many.

Today I decided to experiment with different thresholds for how many times a word needs to be seen before it’s allowed entrance into the coveted spellchecker wordlist. My goal was to increase the accuracy of the AtD spellchecker and drop the number of misspelled words in the dictionary.

Here are the results, AtD:n means AtD requires a word be seen n times before AtD includes it in the dictionary.

ASpell Dataset (Hard to correct errors)

| Engine | Words | Accuracy * | Present Words |

|---|---|---|---|

| AtD:1 | 161,879 | 55.0% | 73 |

| AtD:2 | 116,876 | 55.8% | 57 |

| AtD:3 | 95,910 | 57.3% | 38 |

| AtD:4 | 82,782 | 58.0% | 30 |

| AtD:5 | 73,628 | 58.5% | 27 |

| AtD:6 | 66,666 | 59.1% | 23 |

| ASpell (normal) | n/a | 56.9% | 14 |

| Word 97 | n/a | 59.0% | 18 |

| Word 2000 | n/a | 62.6% | 20 |

| Word 2003 | n/a | 62.8% | 20 |

Wikipedia Dataset (Easy to correct errors)

| Engine | Words | Accuracy * | Present Words |

|---|---|---|---|

| AtD:1 | 161,879 | 87.9% | 233 |

| AtD:2 | 116,876 | 87.8% | 149 |

| AtD:3 | 95,910 | 88.0% | 104 |

| AtD:4 | 82,782 | 88.3% | 72 |

| AtD:5 | 73,628 | 88.3% | 59 |

| AtD:6 | 66,666 | 88.62% | 48 |

| ASpell (normal) | n/a | 84.7% | 44 |

| Word 97 | n/a | 89.0% | 31 |

| Word 2000 | n/a | 92.5% | 42 |

| Word 2003 | n/a | 92.6% | 41 |

Experiment data and comparison numbers from: Deorowicz, S., Ciura M. G., Correcting spelling errors by modelling their causes, International Journal of Applied Mathematics and Computer Science, 2005; 15(2):275–285.

* Accuracy numbers show spell checking without context as the Word and ASpell checkers are not contextual (and therefor the data isn’t either).

After seeing these results, I’ve decided to settle on a threshold of 2 to start and I’ll move to 3 after no one complains about 2.

I’m not too happy that the present word count is so sky high but as I add more data to AtD and up the minimum word threshold this problem should go away. This is progress though. Six months ago I had so little data I wouldn’t have been able to use a threshold of 2, even if I wanted to.

Grammar Checkers – Not so much magic

I once read a quote where an early pioneer of computerized grammar checkers expressed disappointment about how little the technology has evolved. It’s amazing to me how grammar checking is simultaneously simple (rule-based) and complicated. The complicated part is the technologies that make the abstractions about the raw text possible.

It helps to start with, what is a grammar checker? When I write grammar checker here I’m really referring to a writing checker that looks for phrases that represent an error. Despite advances in AI, complete understanding of unstructured text is still beyond the reach of computers. Grammar checkers work by finding patterns that a human created and flagging text that matches these patterns.

Patterns can be as simple as always flagging “your welcome” and suggesting “you’re welcome”. While these patterns are simple to make a checker for, they don’t offer much power. A grammar checker with any kind of coverage would need tens or hundreds of thousands of rules to be useful to anyone.

Realizing this, NLP researchers decided to come up with ways to infer information about text at a higher level and write rules that take advantage of this higher level information. One example is part-of-speech tagging. In elementary school you may have learned grammar by labeling words in a sentence with verb, noun, adjective, etc. Most grammar checkers do this too and the process is called tagging. With part-of-speech information, one rule can capture many writing errors. In After the Deadline, I use part-of-speech tags to find errors where a plural noun is used with a determiner that expects a singular noun e.g. “There is categories”

While tagging gives extra information about each word, the rules we can write are still limited. Some words should be grouped together, for example proper nouns like “New York”. The process of grouping words that belong together is known as chunking. Through chunking rule writers can have more confidence that what follows a tagged word (or chunk) really has the characteristics (plural, singular) assumed by the rule. For example “There is many categories” should be flagged and a decent chunker makes it easier for a rule to realize the phrase following “There is” represents a plural noun phrase.

The next abstraction is the full parse. A full-parse is where the computer tries to infer the subject, object, and verbs in a sentence. The structure of the sentence is placed into a tree-like data structure that the rules can refer to at will. With a full-parse a grammar checker can offer suggestions that drastically restructure the sentence (e.g. reword passive voice), decide which sentences make no sense, and find errors that are multiple words apart (subject verb agreement errors).

Regardless of the abstraction level used, grammar checkers are still rule-based. The techniques that back these abstractions can become very sophisticated. It seems much research has focused on improving these abstractions.

To move grammar checking to a new level, I expect we will need new abstractions one can use when writing rules. I also expect developing techniques to automatically mine rules will be a valuable research subject.

Comments Off on Making the spell checker… more awesome.